Training Mika-X's Brain: Fine-tuning

Category: AI, Human-Robot Interaction, Machine Learning, Neural Networks, Deep Learning

Today, we'll take a look at the finetuning process, necessary to bump the prediction values.

A couple of early tests gave a results that were pretty disappointing. It was clear that the models lack sufficient data and couldn’t make up its “mind”, e.g. emotion confusion was rampant:

- Neutral face consistently misclassified as "sad" (I know I might not look super cheerful, but c’mon now…)

- Angry expressions often identified as "happy"

- Surprised expressions labeled as "angry"

- Fear expressions rarely detected at all

- Disgust emotion was completely missing from detections

Also, glasses significantly impacted results - "sad" dominated with glasses, "surprised" without them. Additionally, face detection remained unstable, with the green rectangle appearing and disappearing constantly, even in perfect lighting.

Haar cascade parameters I used:

- scaleFactor: 1.05-1.3

- minNeighbors: 3-5

- minSize: (30,30) to (50,50)

Despite parameter tuning, consistent face detection remained elusive.

Regarding voice, every recording, regardless of the actual emotion expressed, was classified as "happy" with high confidence (0.8-1.0). Whether speaking in a tragic, sad, terrified, or angry tone, no matter how exaggerated, the result was consistently the same.

Onto fine-tuning - why personalization matters

Since I can only get so far with FER-2013 and its data quality issues I mentioned in post 1, it was time to prepare additional data sets and re-train the models for face and voice recognition. Fine-tuning (or transfer learning) has a few obvious advantages to it:

1. Individual Expression Patterns: Each person expresses emotions uniquely. If you are training a personal AI assistant,

it is YOUR face that matters

2. Voice Characteristics: Pitch, tone, and speech patterns vary significantly between individuals

3. Cultural and Personal Nuances: emotional expression is deeply personal and culturally influenced

In a a nutshell, fine-tuning works as follows:

1. Load the "Expert" Model: you start with the model that has already learned all the general features from the thousands of generic images/sounds.

2. Freeze the Foundational Knowledge: you "lock" the early layers of the model. These layers have learned to recognize fundamental patterns, like edges, shapes, and textures in faces, or pitch and frequency in audio. This knowledge is universal and extremely valuable.

3. Specialize the Decision-Making: you then train only the final few layers of the model using only your personalized dataset. These final layers are what make the ultimate decision (e.g., "is this face happy or sad?").

To sum up what I am working with:

- Hardware for testing: Standard Logitech 1080p webcam and Maono PD200X microphone on a Windows machine

- Environment: Python 3.9 with TensorFlow/Keras, OpenCV, and Librosa (run via Anaconda)

- Models: CNN for facial recognition (currently: 60.95% accuracy from generic data set), Neural Network for voice recognition (currently: 59.72% accuracy from generic data set)

Facial Data Collection Strategy:

- Equipment: 1920x1080, 30fps phone camera in controlled studio lighting (images will be greatly reduced and turned into

greyscale anyway, so there is no need to go with 4k or any high-definition cameras, as it would only increase file

sizes, without any significant benefits. Lighting and focused footage is more important.)

- Method: 30-60 second videos, 1-3 per emotion, with natural head movement (looking straight, then slightly upwards and

downwards)

- Processing: crop footage to focus on face, square format, export as .png sequences out of Filmora

- Expected Outcome: 500-1,000 good quality frames per emotion

Voice Data Collection Strategy:

- Equipment: Basic recording with pop filter and adjusted environment, using Audacity for processing

- Method: Record 100 different lines per emotion (7 in total: neutral, happy, sad, angry, scared, disgusted and

surprised), acting them out. I decided to leave “calm” (which was used in the initial tests) out of the set, due to

little difference between it and “neutral”. I might add it later on.

- Processing: Extract and export separate audio files as .wav

- Expected Outcome: High-quality, diverse samples capturing personal voice patterns

Expected Improvements after the retraining of the model:

- Facial recognition accuracy: hopefully 80-90% (vs. current 60.95%)

- Voice recognition accuracy: would be nice to get at least 75-85% (vs. current 59.72%)

- Emotion consistency: Dramatic reduction in emotion flipping and false positives

- User satisfaction: More natural and accurate emotional responses

I also intend to use some of the professional video lights for better illumination and see if it helps with face detection issues I had before.

Fine-tuning process:

Once I gathered the additional data sets, both image and audio, I started fine-tuning the models. The first attempt was disappointing. The facial model achieved only 0.2887 validation accuracy, completing only 25 epochs (full "rounds" through all the image data), before giving up. The voice model performed similarly poorly. The models weren't learning effectively from my personal data.

Key Issues Identified:

1. The model architecture wasn't properly leveraging the pre-trained features

1. The model architecture wasn't properly leveraging the pre-trained features

2. Learning rates weren't optimized for fine-tuning

3. Data preprocessing wasn't consistent between training and fine-tuning

Second Attempt: Enhanced Fine-Tuning

I tried a more sophisticated approach with:

For Facial Model:

- Replaced `Flatten()` with `GlobalAveragePooling2D()` to reduce overfitting

- Added a learning rate scheduler for better convergence

- Applied data augmentation only to training data, not validation data

- Increased regularization with L2 penalties

For Voice Model:

- Reduced L2 regularization strength to prevent underfitting

- Added learning rate scheduler

- Increased early stopping patience to allow more training time

- Maintained the smaller batch size which seems to work well

Results for facial model were 57.32% validation accuracy and for voice model: 66.00% validation accuracy. This was SOME progress, but I knew it need to be better.

VOICE MODEL - RISING ACCURACY TO 95% (or so it seemed)

By reduced regularization strength (L2 from 0.01 to 0.003), reducing dropout rates, increasing learning rate slightly (LEARNING_RATE / 15) and increasing early stopping patience, I was able to reach 75% accuracy but wasn't able to move beyond.

Eventually, I created a script to augment my voice data by: adding random noise, shifting audio in time, changing pitch and adjusting speed.

This increased my training data from 700 samples to over 2,800 samples, providing more variety for the model to learn from. I also expanded the feature set from basic MFCCs to include:

- More MFCC coefficients (20 instead of 13) - the most important, standard way to represent the "timbre" or quality of a voice.

- Delta and delta-delta MFCCs - the rate of change (velocity) and acceleration of the MFCCs

- Chroma features - help capture the intonation and pitch profile of the speech in a musically-aware way, which can be a unique identifier

- Spectral features, like centroid (where the majority of the sound's energy is concentrated), rolloff (brightness/skewness of the sound) and bandwidth (how spread out the sound's frequencies are)

- Zero crossing rate (how many times the audio signal crosses the zero-amplitude line, used as indicator of noisiness)

- Tempo and beat features (features that estimate the underlying rhythm, or pace, of the audio

- Pitch (fundamental frequency) features

- Energy features (loudness or amplitude of the signal over time)

- Mel-spectrogram features (a spectrogram with the frequency axis converted to the Mel scale to mimic human hearing)

- Tonnetz features (maps the harmonic content of the audio onto a six-dimensional space. It's used to analyze the tonal relationships in the sound)

This expanded the feature set from 42 features to 131 features, giving the model much more information to work with. I implemented a custom attention layer to help the model focus on the most important features for emotion recognition. This was tricky to implement correctly, but after several attempts, I got it working, and it managed to achieve 0.9575% validation accuracy. Now, that's what I'm talking about! 😊 (little did I know, I was in for a nasty surprise...)

FACIAL MODEL - RISING ACCURACY TO 96% (really?)

Fine-tuning facial model turned out to be significantly more tricky. After several unsuccessful trainings, I tried an ensemble method, and discovered that by training multiple models and combining their predictions, I could get improved performance. For example:

- Model 1: 45% accuracy

- Model 2: 47% accuracy

- Model 3: 61% accuracy (the best so far).

This taught me that different model architectures and feature extraction points could capture different aspects of emotional expression, and combining them led to better overall performance. However, I was stuck ~60% threshold. Eventually, I applied several methods that allowed me to cross that border.

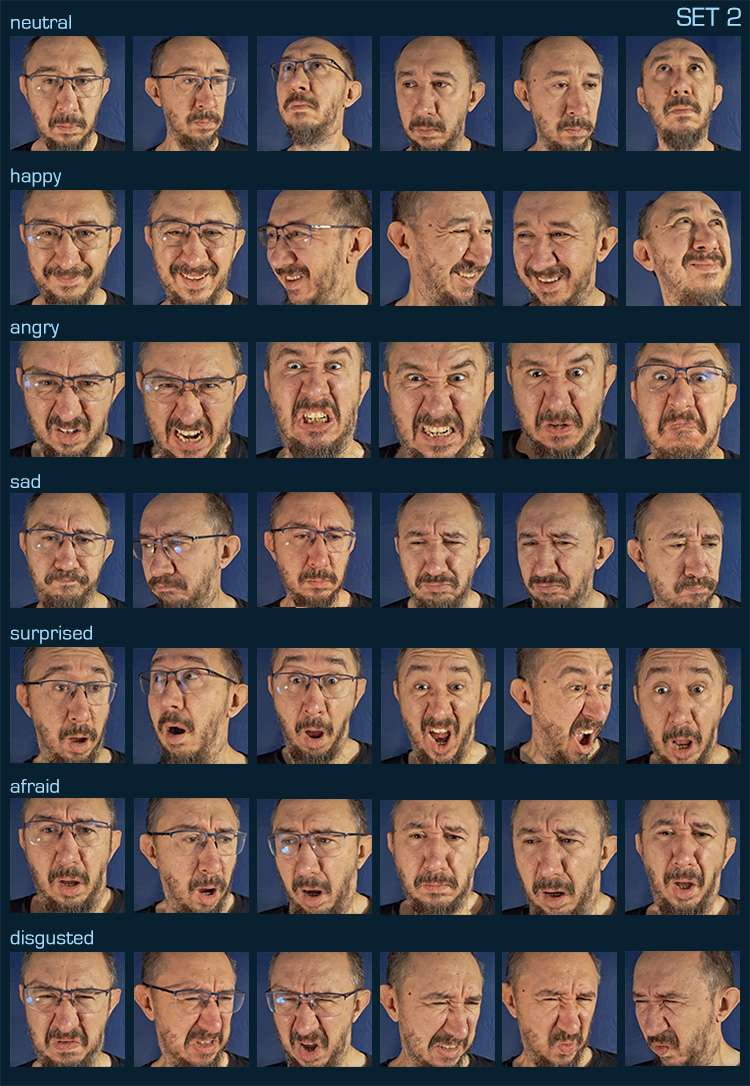

a) Creating additional image sets and adjusting preprocessing

I created a second image set, to augment the initial one, with additional expressions, over-acted in some case for the sake of clarity (e.g. between "angry" and "surprised"), and slightly differently framed, to give the model something extra to work with. I also used the lighting I typically have at my workdesk. I re-run the preprocessing, having all the images greyscaled, resized to 48x48, equalized and saved as NumPy arrays.

b) Adjusting data augmentation

I also found out that my initial attempts with aggressive data augmentation actually hurt performance, dropping accuracy significantly. Through experimentation, I found that a more conservative approach worked much better, e.g.

- rotation_range=10 (reduced from 15)

- width_shift_range=0.1 (reduced from 0.15)

- height_shift_range=0.1 (reduced from 0.15)

- zoom_range=0.1 (reduced from 0.15)

c) Addressing class imbalance

I discovered that my dataset had imbalanced representation of different emotions. Using scikit-learn's class_weight functionality helped address this. This ensured the model paid appropriate attention to underrepresented emotion classes.

d) Advanced CNN Architecture

Moving beyond simple fine-tuning, I developed a more sophisticated CNN architecture inspired by successful facial recognition models. The architecture used three convolutional blocks with increasing filter sizes (64, 128, 256), batch normalization for stability, and dropout for regularization.

These implementations allowed me eventually to achieve ~71% accuracy. Not too bad, but I wanted more.

Going back to ensemble models

Having feeling ensemble models are the key, I used the 71% model to train some more, like so:

- Model 1: The most successful architecture (0.7148 accuracy)

- Model 2: Deeper architecture with more filters and slightly higher learning rate

- Model 3: Wider architecture with more filters in early layers and lower learning rate

Ensemble method was simple averaging of predictions from all models and creation of a single ensemble model that can be saved and loaded. It worked pretty well, first I got ~75% and eventually ~83%, with one model achieving on its own 0.8656 accuracy. I decided to use it for the final refinement. It had these characteristics that likely contributed to its better performance:

- Deeper architecture with more filters (64, 128, 256)- More dense units (512, 256)

- Slightly higher learning rate (0.0002)

This suggested that my dataset benefits from a model with more capacity to learn complex patterns. I created a script to train six variants of the most successful model with slight modifications to the parameters: varying the number of filters, adjusting the learning rate, modifying the dropout rates and changing the number of dense units. The script performed the following:

v1: Reproduced the original model (64, 128, 256 filters)

v2: Increased filters to (80, 160, 320)

v3: Increased learning rate to 0.0003

v4: Reduced dropout rates

v5: Increased dense units to (768, 384)

v6: Combined improvements from v2, v4, and v5

The most successful one turned out to be v3, which resulted in a whooping 0.9607 validation accuracy, suggesting that the original learning rate (0.0002) was still too low for optimal training. I’ll take that, but we need to see how it will perform "in the field".

Key Lessons Learned

1. Ensemble Methods Work: Training multiple models with different architectures and combining their predictions can

significantly improve performance.

2. Feature Engineering is Crucial: For voice emotion recognition, expanding the feature set made a huge difference. More

features give the model more information to distinguish between emotions (or so it seemed)

3. Data Augmentation Helps: Especially for voice data, augmenting the training samples with various transformations

helps the model generalize better

4. Attention Mechanisms are Powerful: Custom attention layers can help models focus on the most relevant features for

the task at hand.

5. Patience and Persistence Pay Off: Fine-tuning models is an iterative process, which takes time. Each attempt taught

me something new, even when it didn't immediately improve results (or even went backwards).

6. Voice vs. Facial Recognition Differences: Voice emotion recognition is inherently more challenging than facial

recognition and requires different techniques to achieve good results.

While I'm pleased with these results, there's always room for improvement:

1. For the facial model, I'd like to experiment with more sophisticated ensemble methods and potentially try progressive

unfreezing of the base model layers.

- Ensemble of Top Models: Combine the best model (0.9607), second best (0.9443), and the third (0.9049) for potentially

even higher accuracy.

- Fine-tuning: Try training the best model for a few more epochs with an even lower learning rate to see if it can

improve further.

- Data Augmentation: Experiment with slightly more aggressive augmentation now that you have a strong base model.

- Test Time Augmentation (TTA): Make predictions on multiple augmented versions of the same input and average them.

2. For the voice model, I could explore more advanced architectures like LSTMs or transformers that might better capture

the temporal aspects of speech.

3. I'm also interested in combining the facial and voice models at a deeper level, rather than just combining their

predictions.

For now though, with 96% facial and 95% voice recognitions, I was ready to put them to the test! (...and receive a cold shower 🥺). Cheers!

- Adam